In Open vSwitch 101, I described the three main components that make up Open vSwitch (OVS) from an architectural standpoint, namely ovs-vswitchd, ovsdb-server, and the fast path kernel module. If you start to work with OVS, the first thing you realize is that it takes quite a bit more knowledge to really understand it. This post will focus on some design principles and options when running OVS on a hypervisor like KVM in conjunction with a network virtualization solution.

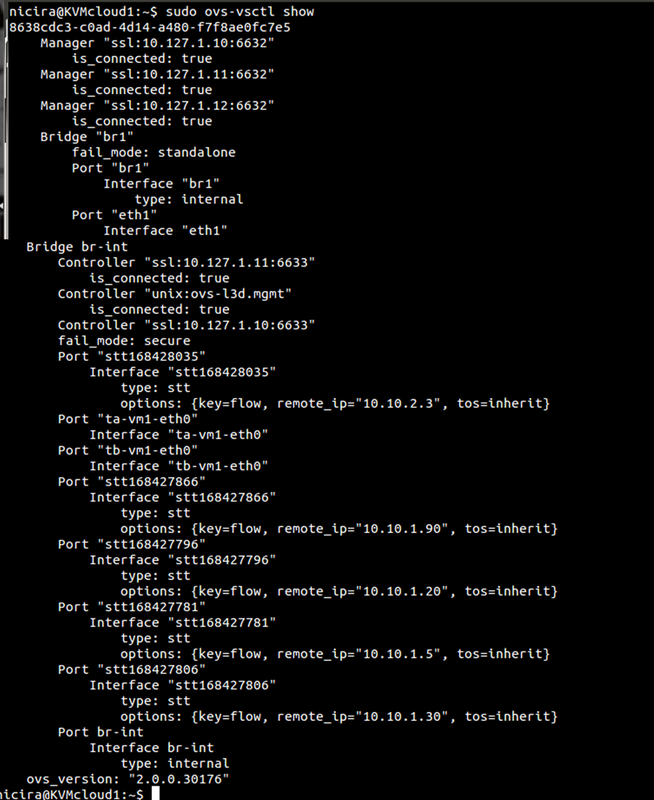

This example also assumes that an overlay network virtualization solution carries all VM data traffic leaving the host, and that it uses OpenFlow/OVSDB for communication between the controller and OVS.

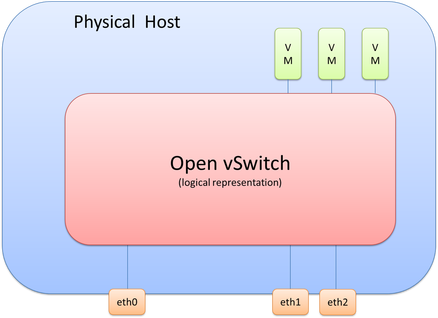

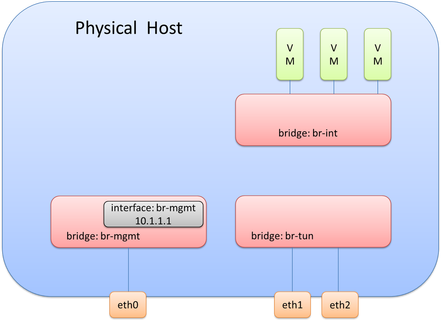

If we take this scenario, we know we will need to connect multiple virtual machines and three physical interfaces to OVS, which logically looks like the following diagram.

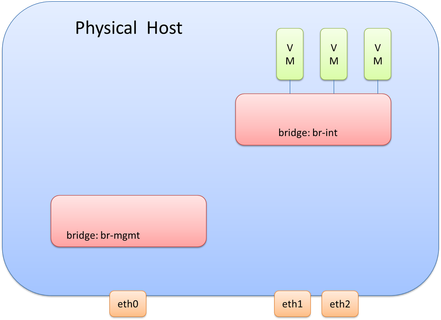

In deployments that leverage OVS in conjunction with OpenFlow/OVSDB network virtualization solutions like NSX or even with OpenDaylight+OVS, it is good practice to separate the bridge that the VMs connect to vs. the bridge(s) for everything else. One reason for this is that the SDN or Network Virtualization controller will be making changes to the bridge and it is good practice to isolate these changes to a single bridge that only the VMs connect to. The bridge that the VMs connect to is called the integration bridge and is often identified as ‘br-int’ when on the Linux command line.
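As a rough illustration of the integration bridge, the sketch below builds the `ovs-vsctl` commands that would create br-int and attach VM ports to it. The port names `vnet0` and `vnet1` are assumptions for illustration only; in practice the hypervisor tooling (libvirt, for example) usually attaches the VM interfaces for you. The function only returns the commands, it does not run them.

```python
def integration_bridge_commands(bridge="br-int", vm_ports=("vnet0", "vnet1")):
    """Return the ovs-vsctl commands that create the integration bridge
    and attach the given VM ports to it.

    The VM port names are hypothetical placeholders.
    """
    # --may-exist makes the command a no-op if the bridge/port is already there.
    commands = [["ovs-vsctl", "--may-exist", "add-br", bridge]]
    for port in vm_ports:
        commands.append(["ovs-vsctl", "--may-exist", "add-port", bridge, port])
    return commands


if __name__ == "__main__":
    for command in integration_bridge_commands():
        print(" ".join(command))
```

Note that no physical interface appears in this list: only VM-facing ports go on br-int, which keeps the controller's changes isolated to that one bridge.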

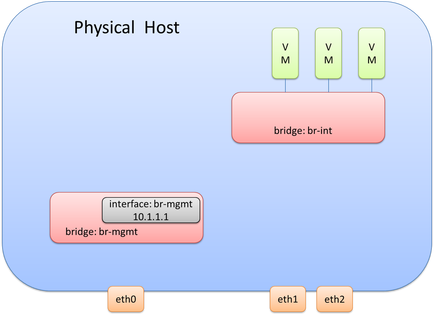

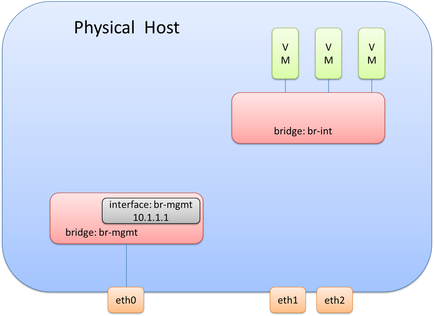

From a management interface perspective, we’ll also look at using OVS, although the traditional configuration of assigning the management IP address directly to the interface is also a valid design/configuration. Since we won’t be connecting the management interface to br-int, we will need another OVS bridge to connect the management interface to. Let’s call this bridge ‘br-mgmt.’ This would look like the following diagram. Remember, we still haven’t covered eth1 and eth2, but that is coming.

Remember, the last thing we just did for br-mgmt was connect eth0 to br-mgmt.
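A minimal sketch of what the br-mgmt setup could look like on the command line follows. It assumes the management IP moves from eth0 to the bridge's own internal interface (which has the same name as the bridge), and it uses `192.0.2.10/24` purely as a placeholder address. The function only builds the commands so they can be reviewed first.

```python
import ipaddress


def management_bridge_commands(mgmt_cidr, bridge="br-mgmt", uplink="eth0"):
    """Return the commands that create the management bridge, attach the
    physical uplink, and move the management IP onto the bridge.

    mgmt_cidr is a placeholder such as "192.0.2.10/24".
    """
    # Validate the address early; raises ValueError on malformed input.
    ipaddress.ip_interface(mgmt_cidr)
    return [
        ["ovs-vsctl", "--may-exist", "add-br", bridge],
        ["ovs-vsctl", "--may-exist", "add-port", bridge, uplink],
        # The IP no longer lives on the physical interface...
        ["ip", "addr", "flush", "dev", uplink],
        # ...it lives on the bridge's internal interface instead.
        ["ip", "addr", "add", mgmt_cidr, "dev", bridge],
        ["ip", "link", "set", bridge, "up"],
    ]


if __name__ == "__main__":
    for command in management_bridge_commands("192.0.2.10/24"):
        print(" ".join(command))
```

If you run commands like these over an SSH session that rides on eth0, expect to lose connectivity partway through, so a console session is the safer choice.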

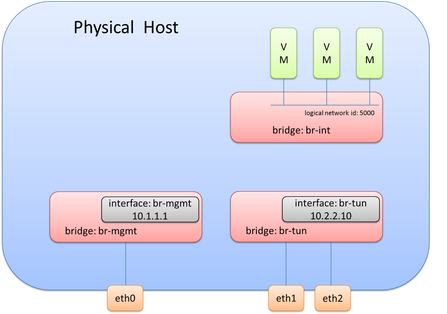

Now we need to actually do something similar with the physical interfaces that will be used for data (overlay transport), i.e. eth1 and eth2. As stated previously, it is generally good practice not to have any physical interfaces connected to br-int. This means we will need a separate bridge for eth1 and eth2. A bridge, often called br-tun in overlay solutions, can be created and eth1 and eth2 can be connected to this newly created bridge. This looks like so:
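The original command listing is not reproduced here, so what follows is only a sketch of one way to do it. It assumes eth1 and eth2 are combined into an OVS bond (the name `bond0` is hypothetical), since attaching two uplinks to the same bridge as independent ports can create a forwarding loop if both reach the same L2 segment.

```python
def transport_bridge_commands(bridge="br-tun", uplinks=("eth1", "eth2"),
                              bond_name="bond0"):
    """Return the ovs-vsctl commands that create the transport bridge and
    attach the physical uplinks to it.

    Two or more uplinks are attached as a single bond (an assumption of
    this sketch); a single uplink is attached as a plain port.
    """
    if not uplinks:
        raise ValueError("at least one uplink interface is required")
    commands = [["ovs-vsctl", "--may-exist", "add-br", bridge]]
    if len(uplinks) > 1:
        # add-bond takes the bridge, the bond port name, then the members.
        commands.append(["ovs-vsctl", "add-bond", bridge, bond_name, *uplinks])
    else:
        commands.append(["ovs-vsctl", "--may-exist", "add-port", bridge, uplinks[0]])
    return commands


if __name__ == "__main__":
    for command in transport_bridge_commands():
        print(" ".join(command))
```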

At this point, the OVS diagram would look like the following picture.

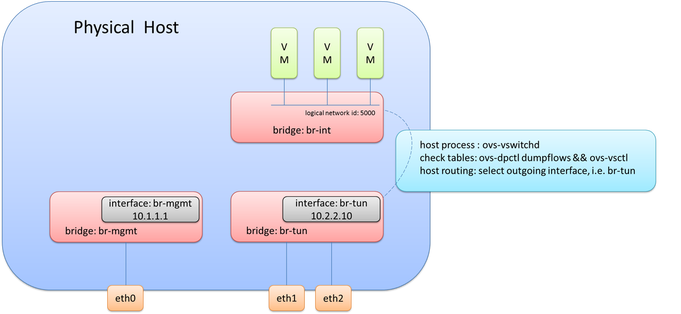

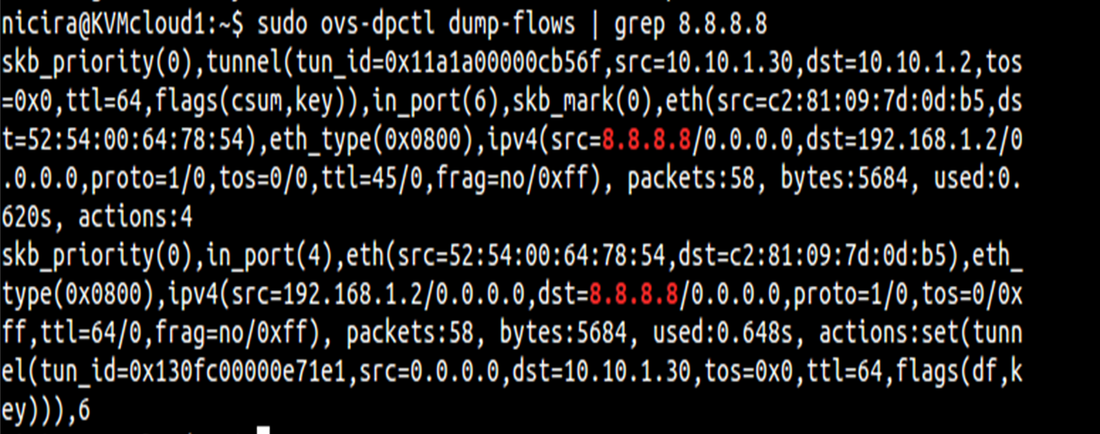

Note: This logical tunnel interface was programmatically provisioned by the NSX controller via OVSDB and could be of type STT, VXLAN, or GRE. In this case, it was actually STT (as you’ll see in the pictures below). This isn’t something you would do manually, unless of course, you aren’t using a central controller.

Okay, we now know the destination TEP and the logical interface to forward the traffic out of, but what now? The interesting and very important point is that forwarding the encapsulated packets now becomes a function of the host (hypervisor) itself. To be more specific, the host process within OVS, ovs-vswitchd, forwards the traffic, and what this really means is that the host routing table is used. If the destination/remote IP on the tunnel interface is X, the host checks its routing table for how to get to X. Based on route recursion, the lookup resolves to a directly connected interface, and the traffic egresses using the source IP address configured on the internal port previously configured on ‘br-tun’, i.e. the directly connected interface.
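To make the lookup concrete, here is a small conceptual model of it: a longest-prefix match followed by recursion on the next hop until a directly connected route is reached. It is not how the kernel implements routing. The route entries, the port name `tep0`, the gateway `10.2.2.1`, and the local address `10.2.2.10` are all assumptions chosen to fit the 10.2.2.0/24 transport subnet used in this post.

```python
import ipaddress

# Hypothetical host routing table: a default route and the connected
# transport subnet that sits on the internal port of br-tun.
ROUTES = [
    {"prefix": "0.0.0.0/0", "via": "10.2.2.1", "dev": None, "src": None},
    {"prefix": "10.2.2.0/24", "via": None, "dev": "tep0", "src": "10.2.2.10"},
]


def lookup_route(routes, destination):
    """Return the most specific route containing destination, or None."""
    address = ipaddress.ip_address(destination)
    matches = [r for r in routes if address in ipaddress.ip_network(r["prefix"])]
    if not matches:
        return None
    return max(matches, key=lambda r: ipaddress.ip_network(r["prefix"]).prefixlen)


def resolve_egress(routes, destination, max_depth=8):
    """Follow next hops until a directly connected route is found.

    Returns the egress device, the source IP, and the on-link next hop,
    or None if the destination cannot be resolved.
    """
    target = destination
    for _ in range(max_depth):
        route = lookup_route(routes, target)
        if route is None:
            return None
        if route["via"] is None:
            # Directly connected: target is the address to ARP for.
            return {"dev": route["dev"], "src": route["src"], "next_hop": target}
        target = route["via"]
    return None


if __name__ == "__main__":
    print(resolve_egress(ROUTES, "10.3.3.20"))  # remote TEP on another subnet
    print(resolve_egress(ROUTES, "10.2.2.20"))  # remote TEP on the same segment
```

On a live host, `ip route get <remote TEP IP>` answers the same question for the real routing table.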

To bring this all together, the original frame and packet are encapsulated, and as the result egresses from br-tun, the source MAC/IP will be those of the internal port on br-tun, the destination MAC will be the default gateway/router MAC on the segment (unless the remote IP is on the same segment), and the destination IP will be the IP address of the tunnel endpoint (TEP) of the remote hypervisor.
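The addressing rules in that paragraph can be written down as a short sketch. Everything concrete here is an assumption for illustration: the MAC addresses are made-up locally administered values, the IPs follow the 10.2.2.0/24 transport subnet, and the `neighbors` dictionary stands in for the host's ARP table.

```python
import ipaddress
from dataclasses import dataclass


@dataclass(frozen=True)
class OuterHeader:
    src_mac: str
    dst_mac: str
    src_ip: str
    dst_ip: str


def build_outer_header(tep_interface, tep_mac, gateway_ip, remote_tep_ip, neighbors):
    """Pick the outer L2/L3 addresses for an encapsulated frame.

    tep_interface is the internal port's address in CIDR form, and
    neighbors maps an on-link IP to its MAC (a stand-in for ARP).
    """
    local = ipaddress.ip_interface(tep_interface)
    remote = ipaddress.ip_address(remote_tep_ip)
    # Same segment: frame goes straight to the remote TEP.
    # Different segment: frame goes to the default gateway's MAC.
    next_hop = remote_tep_ip if remote in local.network else gateway_ip
    return OuterHeader(
        src_mac=tep_mac,
        dst_mac=neighbors[next_hop],
        src_ip=str(local.ip),
        dst_ip=remote_tep_ip,
    )


if __name__ == "__main__":
    neighbors = {
        "10.2.2.1": "02:00:00:00:00:01",   # hypothetical gateway MAC
        "10.2.2.20": "02:00:00:00:00:20",  # hypothetical same-segment TEP MAC
    }
    print(build_outer_header("10.2.2.10/24", "02:00:00:00:00:10",
                             "10.2.2.1", "10.3.3.20", neighbors))
```

The destination IP is always the remote TEP; only the destination MAC changes depending on whether that TEP is on the local segment.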

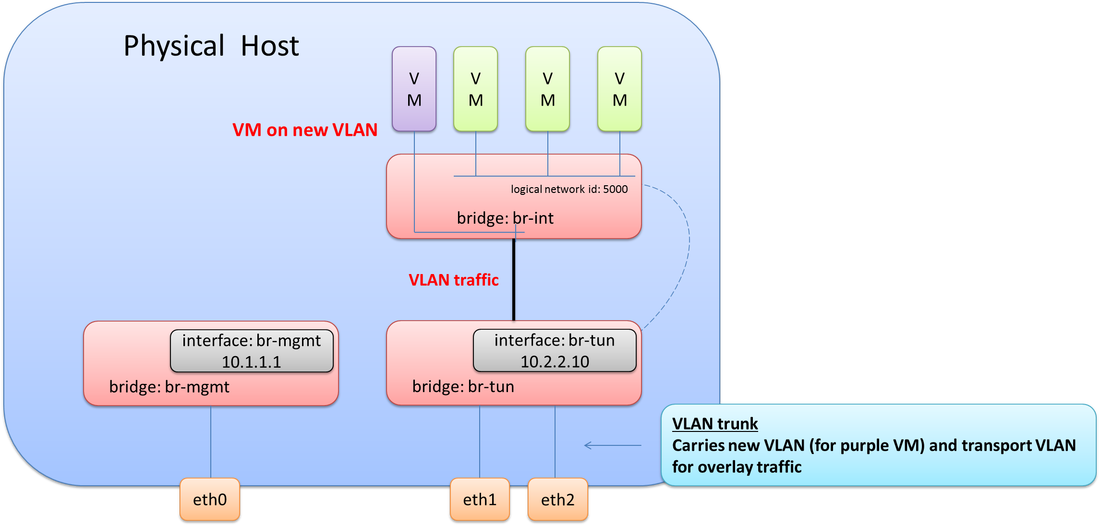

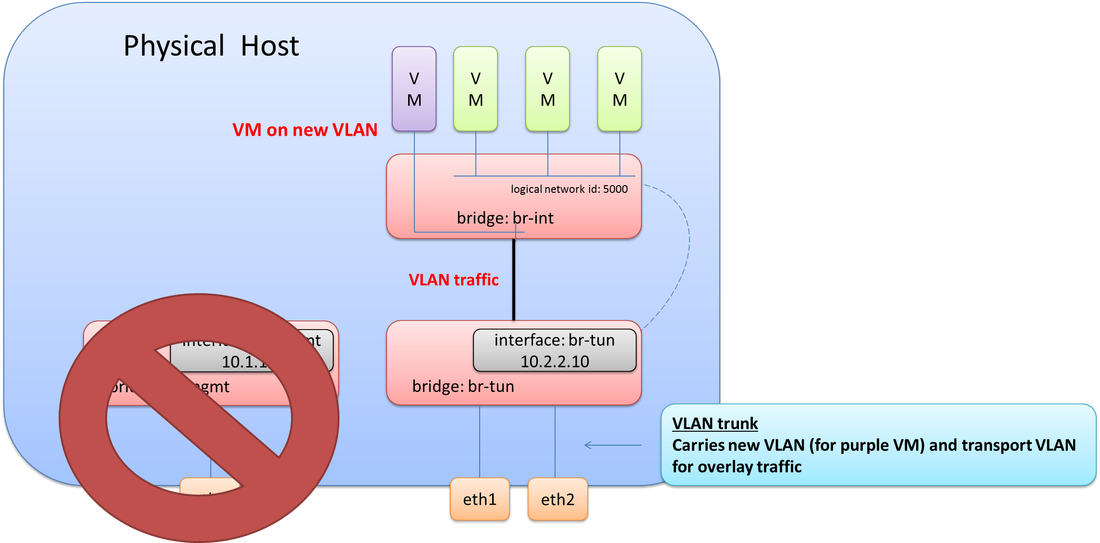

This design would be a working design for 100% overlay traffic, but what if you needed VMs to be on a VLAN on the same physical host that the overlay traffic is on?

Thinking this through, you may realize that an 802.1Q trunk would be required between eth1 & eth2 and the physical network, i.e. between br-tun and the physical network. This means at least two VLANs would need to be configured on br-tun: one VLAN for overlay transport (10.2.2.0/24) and one VLAN for the new VM(s) just added to the host. This also means the VMs and the internal port (that has the IP address) on br-tun need to be assigned to their respective VLANs. It would look like this. Note the connectivity now required between br-int and br-tun.
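One way to express the VLAN assignment and the br-int to br-tun connectivity is with access-port tags and a pair of patch ports, sketched below. The VLAN IDs (20 and 30), the port names `tep0`, `vnet2`, `patch-tun`, and `patch-int` are all hypothetical, and the sketch assumes standard VLAN handling on the bridges; a controller that programs its own flows on br-int may handle tagging differently.

```python
def access_vlan_command(port, vlan_id):
    """Return the ovs-vsctl command that makes port an access port in vlan_id."""
    if not 1 <= vlan_id <= 4094:
        raise ValueError("VLAN ID must be between 1 and 4094")
    return ["ovs-vsctl", "set", "port", port, f"tag={vlan_id}"]


def patch_pair_commands(bridge_a, port_a, bridge_b, port_b):
    """Return the two commands that link bridge_a and bridge_b with patch ports."""
    def one_side(bridge, port, peer):
        return ["ovs-vsctl", "add-port", bridge, port, "--",
                "set", "interface", port, "type=patch", f"options:peer={peer}"]
    return [one_side(bridge_a, port_a, port_b), one_side(bridge_b, port_b, port_a)]


def vlan_design_commands(transport_vlan=20, vm_vlan=30):
    """Assemble the commands for the mixed overlay + VLAN design."""
    commands = [
        # Internal port carrying the TEP IP goes in the transport VLAN.
        access_vlan_command("tep0", transport_vlan),
        # The VLAN-backed VM port on br-int goes in its own VLAN.
        access_vlan_command("vnet2", vm_vlan),
    ]
    # Patch ports give VM VLAN traffic a path from br-int to the uplinks.
    commands += patch_pair_commands("br-int", "patch-tun", "br-tun", "patch-int")
    return commands


if __name__ == "__main__":
    for command in vlan_design_commands():
        print(" ".join(command))
```

Ports without a `tag` act as trunks by default, so the patch ports and the physical uplinks carry both VLANs without further configuration in this sketch.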

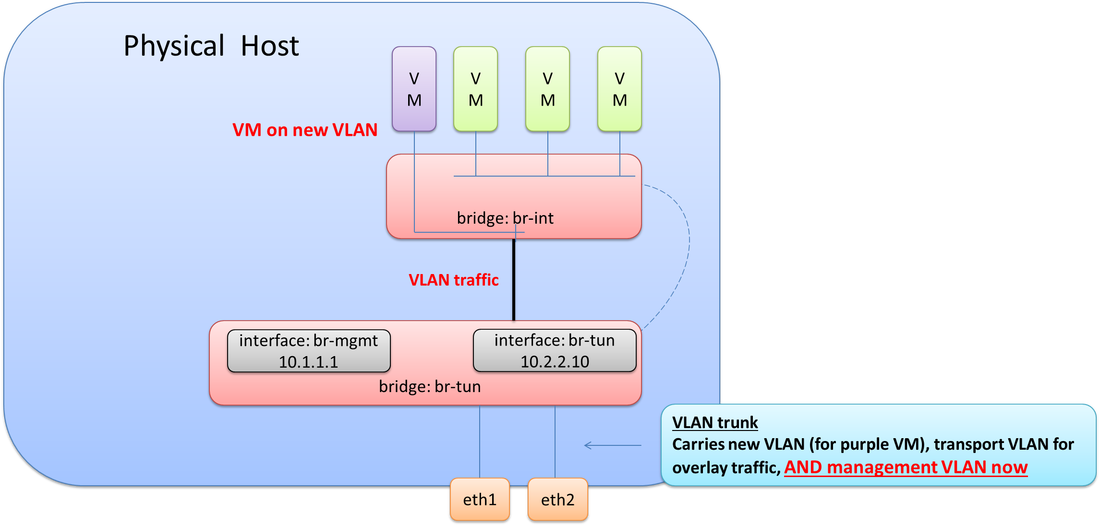

Let’s throw one more twist in this scenario. The management interface (eth0) went bad! We need to bring management connectivity back up. What does this look like?

And of course, if any of this is incorrect, please do let me know and I'll get it updated ASAP.

Thanks,

Jason

Twitter: @jedelman8

Sample output of commands mentioned above:
