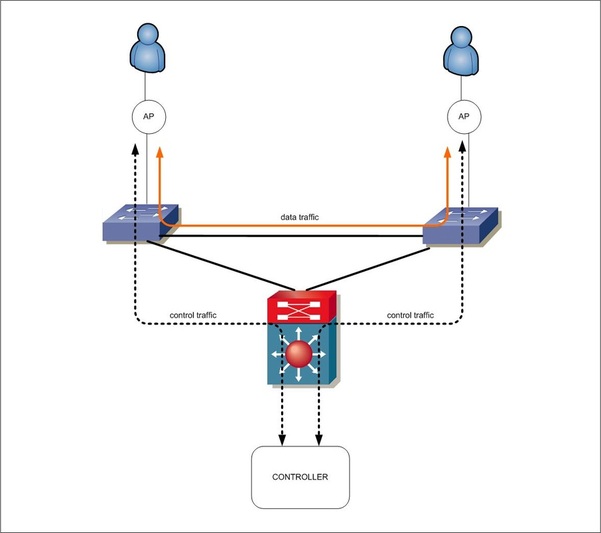

For those who aren’t familiar with Cisco HREAP, it is a design for wireless LANs in which only control traffic gets tunneled back to the controller while data traffic stays local on the switch. The IETF protocol used to communicate between an AP and a controller is called CAPWAP. There are various use cases for the technology, not described here, but that is the 100,000-foot overview.

So, looking at the diagram below, we see a very basic implementation of HREAP.

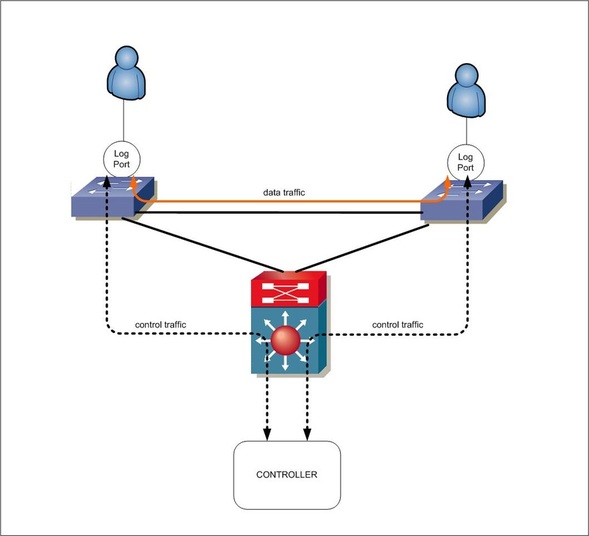

Throughout this post, I reference VLAN and AP interchangeably to further stress the point that a controller can control various types of devices – not just Access Points.

I’ve mentioned this in the past, and I don’t know it for a fact, but I speculate this is what Aruba is doing as well after announcing its controller-based switches last year.

In this design by Aruba, or from any WLAN/network manufacturer, imagine this logical port is now a VLAN. Assuming we stay in HREAP mode, control traffic is managed via a controller and data traffic is still handled by the local switches, all by using CAPWAP. This deployment offers unified management between Access Points and individual switches, in the sense that the same network policy can be applied to a user independent of the medium used. Why don’t we have some fun with this and call it a borderless network? ;)

While this design is extremely beneficial for simplifying management, there could still be flaws depending on how large a given environment is. Still focusing on the HREAP design, these flaws could include instability due to extremely large broadcast domains that need to span every access layer switch, an increasingly large number of MAC addresses that have to be learned on every switch, and, possibly the biggest burden on the network team, the administrative overhead needed to create a dot1q trunk for every Access Point deployed. Imagine a network with hundreds to thousands of APs. That’s a lot of trunks and potentially a lot of VLANs that need to be configured on every port an AP plugs into and then managed on the uplinks back to the Core. Remember, this isn’t the case when tunneling all data back to the controller. But let’s be honest, we all know technology is cyclical. We’ll be back to pervasive HREAP-like deployments soon enough. Controllers won’t be able to handle all the traffic going through them, as one example.

Getting back on track, what if it were possible to keep the benefits of an HREAP design (traffic stays local to the switch, data traffic is not tunneled through a controller, and management is unified) while eliminating the need to configure dot1q trunks on every logical port? And for the LARGE deployments out there, what if it could even eliminate MAC learning between the switches that connect to these HREAP-enabled ports/VLANs?

Unified management is easily accomplished by not changing anything. CAPWAP can be used. Given that the controller now has knowledge of both the AP and the switch, configuration should be simplified such that when particular SSIDs are deployed on an AP, the VLANs are auto-configured on the associated switch port. Since we are not talking about VMs and the *need* for L2 adjacency, VLAN sprawl and large MAC tables should not need to exist anyway. One SSID can map back to multiple VLANs, and so on, but if there is a need and MAC tables explode, interestingly enough, it turns into a discussion similar to the one currently happening in the data center.
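To make the auto-configuration idea a bit more concrete, here is a minimal sketch of how a controller might derive a switch port's trunk settings from the SSIDs deployed on an AP. Everything in it is an assumption for illustration: the SSID names, VLAN numbers, AP and switch names, and the shape of the "intent" dictionary are all hypothetical, and nothing here reflects how any vendor's controller actually works.

```python
# Hypothetical SSID-to-VLAN policy held by the controller.
SSID_VLANS = {
    "corp": [10, 11],   # one SSID can map back to multiple VLANs
    "guest": [20],
}

# Hypothetical inventory: which switch port each AP plugs into.
AP_UPLINKS = {
    "ap-1": ("switch-a", "Gi1/0/1"),
    "ap-2": ("switch-a", "Gi1/0/2"),
}


def trunk_vlans_for(ssids):
    """Collect the sorted, de-duplicated VLANs needed for a set of SSIDs."""
    return sorted({vlan for ssid in ssids for vlan in SSID_VLANS[ssid]})


def deploy_ssids(ap_name, ssids):
    """Return the port intent the controller would push for this AP."""
    switch, port = AP_UPLINKS[ap_name]
    return {
        "switch": switch,
        "port": port,
        "mode": "trunk",
        "allowed_vlans": trunk_vlans_for(ssids),
    }


intent = deploy_ssids("ap-1", ["corp", "guest"])
print(intent)
```

The point of the sketch is only that the trunk configuration becomes a by-product of the SSID policy rather than something an administrator types on every port.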

If standard MAC learning is eliminated between switches, there still needs to be a way to map a source MAC to a source port and then a destination MAC to a destination switch or Node-ID. Information such as the source MAC and port needs to be sent to the controller, where a mapping database could be created and then distributed back to the switches, creating some type of flow table. Sound familiar? If that’s deployed, then maybe we create CAPWAP data tunnels on demand between “logical ports” as needed. Could that work? Maybe. We already know APs can terminate CAPWAP data tunnels, but the question is how many? If an AP has enough horsepower to terminate CAPWAP tunnels, I’d bet a switch would be able to as well. Based on common LAN designs, this may not gain us as much as it does between vswitches in the data center, but my goal was to try to use WLAN principles to create some good analogies for newer protocols and technologies in the data center such as VXLAN and OpenFlow.
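As a rough illustration of that mapping database, here is a toy sketch in which edge devices report locally learned MACs to a controller, and the controller answers with a flow-table-style entry: forward out a local port, or tunnel to the remote node. The class names, the `report` and `flow_entry` methods, the node names, and the entry format are all hypothetical. This is not CAPWAP or OpenFlow message handling, just the lookup logic under those assumptions.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Location:
    node_id: str  # hypothetical identifier for a switch or AP
    port: str     # local port where the MAC was seen


@dataclass
class MappingController:
    """Toy controller that learns MAC locations and hands out flow entries."""
    mac_table: dict = field(default_factory=dict)

    def report(self, mac, node_id, port):
        # An edge device reports a locally learned source MAC.
        self.mac_table[mac.lower()] = Location(node_id, port)

    def flow_entry(self, requesting_node, dst_mac):
        # Build a forwarding instruction from the requesting node's view.
        key = dst_mac.lower()
        loc = self.mac_table.get(key)
        if loc is None:
            return None  # unknown destination; handling is out of scope here
        if loc.node_id == requesting_node:
            return {"match": key, "action": "output", "port": loc.port}
        return {"match": key, "action": "tunnel", "remote_node": loc.node_id}


controller = MappingController()
controller.report("00:11:22:33:44:55", "switch-a", "Gi1/0/1")
controller.report("66:77:88:99:AA:BB", "switch-b", "Gi1/0/7")

local = controller.flow_entry("switch-a", "00:11:22:33:44:55")
remote = controller.flow_entry("switch-a", "66:77:88:99:aa:bb")
print(local)
print(remote)
```

In this sketch the "tunnel" action is where an on-demand data tunnel between two logical ports would come in; how that tunnel is actually built is left open.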

What if we leveraged the controllers that already exist from the wireless world to control and map SSIDs, CAPWAP or VXLAN overlays directly on the switch or AP? This would allow for centralized management of any type of overlay, and would also allow for these overlays to be deployed between switches/APs when communicating with one another, but managed and controlled via your standard and existing network controller. CAPWAP data tunnels between devices in the Campus should suffice just fine where there aren’t 1000s of LANs – remember VXLAN is great because it eliminates the limitation of 4096 segments in cloud-scale data centers. I realize I may not have done the best job transitioning this into a DC and SDN discussion, but hopefully my points are coming across. If not, feel free to comment.
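For reference, the 4096 figure comes from the 12-bit VLAN ID field in 802.1Q, while VXLAN carries a 24-bit network identifier (VNI). A quick bit of arithmetic shows the gap; the variable names are just for illustration.

```python
VLAN_ID_BITS = 12    # IEEE 802.1Q VLAN ID field width
VXLAN_VNI_BITS = 24  # VXLAN Network Identifier field width (RFC 7348)

vlan_ids = 2 ** VLAN_ID_BITS       # size of the VLAN ID space
vxlan_vnis = 2 ** VXLAN_VNI_BITS   # size of the VNI space

print(vlan_ids)                # 4096
print(vxlan_vnis)              # 16777216
print(vxlan_vnis // vlan_ids)  # 4096 times as many segment IDs
```

A campus with a handful of segments never gets near the first number, which is why the segment limit matters far more in cloud-scale data centers.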

The protocol of choice in controller-based wireless networks today is CAPWAP, used for communication between controllers and APs. A new protocol called OpenFlow has been emerging that has similarities to CAPWAP: it is also used between controllers and APs/switches to centralize the control plane of a network. Some are going as far as calling this a Network Operating System running on the controller. I suppose I can buy into that.

FINALLY…THE MAJOR QUESTIONS

Is it easier to integrate OpenFlow into every switch and AP out there for a unified approach to distributing the control and data planes of network devices, or is it easier to modify an existing IETF standard (CAPWAP) and create extensions that offer what OpenFlow brings to the table, such as the ability to manipulate flows? OpenFlow is spoken about as being a very “simple” concept. If it’s that simple and low-level, just integrate it into CAPWAP. Vendors like Aruba and Meraki are already able to control switches via their “CONTROLLER.” It would seem to me that extending CAPWAP would be the approach to take, but hey, what do I know? This would also ease transitions into a controller-based DC or LAN. Maybe we end up with a single multi-protocol “network controller” that runs OpenFlow, CAPWAP, and the next new control plane protocol, and that offers an insane amount of flexibility and programmability.

The most important thing to remember is that it’s about controller-based, or programmable, networks, not the protocol that is actually used to achieve them.

@jedelman8
