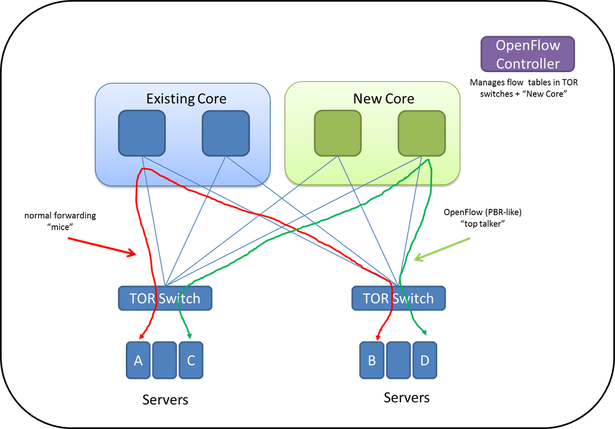

- Start with your current core – let’s assume it’s Layer 2 between the TOR and existing Core/Spine as that is what I see the most with my customers.

- Deploy a top of rack switch that has at least 4 uplinks and supports OpenFlow running in hybrid mode. Hybrid mode here is defined as the ability to forward traffic as normal if there is no match in the OpenFlow table. This is the way HP deploys Sentinel and their controller integration with Lync for dynamic QoS. This approach allows for “switch when you can, OpenFlow when you must”, or you may call this PBR in hardware.

- Two of the uplinks will connect to your current core. These can be 1GE, 10GE, or 40GE, depending on your requirements. This network will handle all traditional, random, and bursty traffic.

- Two of the uplinks will connect to your new core. This network will handle all well-known elephant flows. These will be pre-determined for now, although with a bit more automation and understanding of the environment, this can be dynamic.

- In the diagram shown, A to B and B to A will forward across the current core as normal using standard MAC learning.

- The uplinks that connect to the New Core can be configured as /30 routed links. The /30s will help you ease into a deployment like this, although they aren’t required.
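If you do go with /30 routed uplinks, the addressing is easy to plan up front. Here is a minimal sketch using Python's standard `ipaddress` module. The `10.255.0.0/29` block and the link names are assumptions made up for illustration, as is the choice to give the TOR the first usable address on each link.

```python
import ipaddress


def allocate_p2p_links(block, link_names):
    """Assign one /30 out of `block` to each named uplink (hypothetical helper)."""
    subnets = list(ipaddress.ip_network(block).subnets(new_prefix=30))
    if len(subnets) < len(link_names):
        raise ValueError("block is too small for the requested number of links")
    plan = {}
    for name, subnet in zip(link_names, subnets):
        # A /30 has exactly two usable host addresses, one per end of the link.
        tor_ip, core_ip = subnet.hosts()
        plan[name] = {"subnet": str(subnet), "tor": str(tor_ip), "core": str(core_ip)}
    return plan


# Illustrative block and link names only; substitute your own.
plan = allocate_p2p_links("10.255.0.0/29", ["tor1-newcore1", "tor1-newcore2"])
for name, link in plan.items():
    print(name, link)
```

Each TOR-to-New-Core link gets its own subnet, which is what makes these addresses usable as next hops later on.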

Because C to D will be a known vMotion or maybe a backup (the important piece is that it is a known communication), an OpenFlow rule will be configured on the TOR that C connects to. The OpenFlow rule (to keep things simple) will match anything sourced from MAC C, with an action to forward out one of the uplinks connecting to the New Core. Similarly, rules will be added in the New Core as well as on the TOR that connects to D. The action used could also be an L2 rewrite on egress from the TOR toward the New Core, which wouldn’t require the New Core to be OpenFlow enabled. It also makes use of the /30s. Details…

This keeps things simple using the source MAC as the matching condition. If there are smaller flows between those two hosts as well, they will traverse the New Core too. Is that a problem? Let’s not boil the ocean.
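To make the hybrid behavior concrete, here is a minimal Python sketch of the lookup order on the TOR that C connects to. It is a toy model, not switch or controller code: the `HybridTor` and `FlowRule` names, the port labels, and the MAC addresses are all made up for illustration, and real hardware may treat MAC learning for matched traffic differently.

```python
from dataclasses import dataclass, field


@dataclass
class FlowRule:
    src_mac: str       # match: anything sourced from this MAC
    out_port: str      # action: forward out this port
    priority: int = 100


@dataclass
class HybridTor:
    flow_table: list = field(default_factory=list)
    mac_table: dict = field(default_factory=dict)  # learned MAC -> port

    def add_rule(self, rule):
        self.flow_table.append(rule)
        # Keep the highest-priority rules first so the first hit wins.
        self.flow_table.sort(key=lambda r: r.priority, reverse=True)

    def forward(self, src_mac, dst_mac, in_port):
        # OpenFlow when you must: a table hit overrides normal forwarding.
        for rule in self.flow_table:
            if rule.src_mac == src_mac:
                return rule.out_port
        # Switch when you can: no match, so fall back to plain MAC learning.
        # (Simplification: this model only learns on the fallback path.)
        self.mac_table[src_mac] = in_port
        return self.mac_table.get(dst_mac, "FLOOD")


# Hypothetical addresses for hosts A, B, C, and D.
MAC_A, MAC_B = "00:00:00:00:00:0a", "00:00:00:00:00:0b"
MAC_C, MAC_D = "00:00:00:00:00:0c", "00:00:00:00:00:0d"

tor = HybridTor()
tor.add_rule(FlowRule(src_mac=MAC_C, out_port="uplink-newcore-1"))

print(tor.forward(MAC_A, MAC_B, "port1"))  # B not learned yet, so flood
print(tor.forward(MAC_B, MAC_A, "port2"))  # A was learned on port1
print(tor.forward(MAC_C, MAC_D, "port3"))  # matches the rule for MAC C
```

Note that the rule catches everything sourced from C, small flows included, which is exactly the trade-off described above.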

A place in the network like this is where Affinities can be used in traditional leaf/spine architectures down the road, so we aren't beholden to MAC addresses. Or if you want to call them End Point Groups, that’s fine too. Ideally, you can use IP, hostname, DSCP, VM attribute, etc. to define the groupings that then map back to OpenFlow rules with associated actions.
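As a rough idea of what that mapping could look like, here is a small Python sketch that selects endpoints by attribute and expands the group into per-endpoint match/action entries. Everything in it is assumed for illustration: the inventory, the `role` attribute, the helper names, and the dictionary layout, which is not any particular controller's API. The match field names follow OpenFlow's own `eth_type` and `ipv4_src` naming.

```python
# Hypothetical endpoint inventory; in practice this might come from a CMDB
# or the virtualization manager.
endpoints = [
    {"hostname": "esx-c", "ip": "10.1.1.30", "role": "vmotion"},
    {"hostname": "esx-d", "ip": "10.1.1.40", "role": "vmotion"},
    {"hostname": "web-a", "ip": "10.1.1.10", "role": "web"},
]


def members(inventory, **attrs):
    """Return the endpoints whose attributes all equal the given values."""
    return [e for e in inventory if all(e.get(k) == v for k, v in attrs.items())]


def rules_for_group(inventory, out_port, **attrs):
    """Expand a group definition into one match/action entry per member."""
    return [
        {
            # 0x0800 is the IPv4 EtherType, required before matching on ipv4_src.
            "match": {"eth_type": 0x0800, "ipv4_src": e["ip"]},
            "action": {"output": out_port},
        }
        for e in members(inventory, **attrs)
    ]


for rule in rules_for_group(endpoints, "uplink-newcore-1", role="vmotion"):
    print(rule)
```

The point is that the policy is written once against the group, and the per-endpoint rules are derived from it whenever membership changes.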

For now, this design is meant to keep it simple and look at the benefits of a hybrid OpenFlow network vs. one that is 100% OpenFlow based from vSwitch to TOR to Core. Remember, in this hybrid approach, if there is no OpenFlow match, the traffic is forwarded as normal. This should alleviate concerns about flow table size, controller flow setups per second, and the number of PacketIns that can be sent from a TOR switch to the controller. As hardware and software continue to mature, the exact flow needed (n-tuple) can be redirected to your other Core based on much more than just MAC address. Of course, it can today, but it all comes down to whether the common OpenFlow concerns are relevant for your particular environment.

On a side note: what hardware and software could support such a network? Some companies, like NEC and Big Switch, are advocating for a 100% OpenFlow network. A company like Cumulus isn’t supporting an OpenFlow agent. I don’t think Cisco supports hybrid mode. I’m sure HP could do this, but any other company? Maybe Brocade? That still leaves Pica8, Extreme, Dell, Arista, and Juniper to confirm. Have at it and let me know! :)

Thanks,

Jason

Twitter: @jedelman8
